AI

The Rise of Unit Economics in Machine Learning

AI workloads are rewriting the rules of cost management. From tokens to GPUs, FinOps teams are redefining what value per dollar really means.

Why it matters

AI is no longer a side project. It is becoming core infrastructure.

Flexera’s 2024 State of the Cloud report shows that machine learning and AI are among the most actively used and experimented-with platform services, with 41% of organizations already using ML/AI and many more planning or testing it. At the same time, managing cloud spend remains the top reported challenge for organizations.

Another recent global survey of IT leaders found that 94% of decision makers struggle with cloud costs and that many expect AI to become their top cost challenge within three years, as AI workloads add heavy, unpredictable compute and storage demands.

So the pressure is on. Finance, engineering, and product teams need a more precise way to answer a simple question:

What are we actually paying for when we run AI?

This is where unit economics comes in.

The new dimensions of AI cost tracking

AI workloads do not behave like traditional web apps or databases. They introduce new billing models and variables that need their own metrics and vocabulary.

1. Token-based billing

Most foundation model APIs are priced per token, not per request.

OpenAI, for example, lists API usage per one million input and output tokens. GPT-5.1 input is priced at $1.25 per one million tokens, and output at $10 per one million tokens, with smaller and larger models priced accordingly. That means every prompt, every response, and every context window is a line item.

If your product runs thousands or millions of prompts per day, those fractions of a dollar add up quickly. Without unit metrics like cost per prompt, cost per chat, or cost per user, it is almost impossible to forecast where that money is going.
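To make that concrete, here is a minimal sketch of a cost-per-prompt calculation using the per-million-token rates quoted above. The token counts and daily prompt volume are hypothetical, chosen only to show the arithmetic.

```python
# Per-million-token rates quoted above (USD).
INPUT_PRICE_PER_M = 1.25
OUTPUT_PRICE_PER_M = 10.00


def cost_per_prompt(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the per-million-token rates."""
    return (
        input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    ) / 1_000_000


# Hypothetical workload: 1,200 input tokens and 300 output tokens per prompt,
# at 500,000 prompts per day.
per_prompt = cost_per_prompt(1_200, 300)
daily = per_prompt * 500_000

print(f"cost per prompt: ${per_prompt:.4f}")
print(f"daily cost:      ${daily:,.2f}")
```

Under those assumptions a single prompt costs about $0.0045, which looks like nothing until it is multiplied out to roughly $2,250 per day. Note that output tokens drive two thirds of the cost here even though they are a fifth of the volume.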

2. GPU hour economics

Training and inference on custom or open models usually means GPU time.

Specialized instances are not cheap. Cloud pricing aggregators like Vantage show that an AWS p4d.24xlarge instance with Nvidia A100 GPUs starts at around $22 per hour, while a p5.48xlarge with H100 GPUs is above $55 per hour, before any discounts. Long-running training jobs, large batch inference, or underutilized clusters can burn through budgets fast.

Even if discounts reduce the effective rate, the shape of the spend is the same: a lot of value is riding on very expensive hours.
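One way to see why underutilized clusters hurt is to price the useful GPU hour rather than the billed instance hour. The sketch below uses the approximate hourly rates above and the 8 GPUs that each of these instance types carries. The utilization figures are hypothetical.

```python
def effective_gpu_hour_cost(hourly_rate: float, gpus: int, utilization: float) -> float:
    """USD per useful GPU hour: instance rate split across GPUs, scaled by utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_rate / gpus / utilization


# Approximate on-demand rates from the text; both instance types have 8 GPUs.
INSTANCES = {
    "p4d.24xlarge (A100)": 22.0,
    "p5.48xlarge (H100)": 55.0,
}

# Hypothetical utilization levels: fully busy vs. idle half the time.
for name, rate in INSTANCES.items():
    for utilization in (1.0, 0.5):
        cost = effective_gpu_hour_cost(rate, gpus=8, utilization=utilization)
        print(f"{name} at {utilization:.0%} utilization: ${cost:.2f} per useful GPU hour")
```

At half utilization the effective price of every useful GPU hour doubles, which is the same as paying list price for a discount you never received.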

3. Data movement and storage

AI is hungry for data. Training sets live in object storage, move across regions, and sometimes across clouds. Each of those paths has storage, API, and egress pricing attached.

You do not need exact numbers in the dashboard to feel the impact. You just need to see clearly which workloads generate the most data movement and which ones are actually worth that movement.

Put together, this means every model and every feature now has its own mini cost profile: tokens, GPU hours, storage, and egress.
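A mini cost profile like that can be as simple as four numbers per feature. The sketch below is one possible shape for it. The `CostProfile` name and all dollar figures are hypothetical, not taken from any billing export.

```python
from dataclasses import dataclass, asdict


@dataclass
class CostProfile:
    """Monthly USD spend for one model or feature, split by cost dimension."""
    tokens: float
    gpu_hours: float
    storage: float
    egress: float

    def total(self) -> float:
        return self.tokens + self.gpu_hours + self.storage + self.egress

    def shares(self) -> dict[str, float]:
        """Fraction of total spend per dimension (all zeros if nothing was spent)."""
        total = self.total()
        return {k: (v / total if total else 0.0) for k, v in asdict(self).items()}


# Hypothetical monthly figures for a single feature.
support_assistant = CostProfile(tokens=2250.0, gpu_hours=1320.0, storage=180.0, egress=250.0)

print(f"total: ${support_assistant.total():,.2f}")
for dimension, share in support_assistant.shares().items():
    print(f"  {dimension}: {share:.1%}")
```

Seeing the split per feature is usually enough to tell whether the next optimization should target prompts, GPU scheduling, or data placement.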

Unit economics: redefining value

At its core, unit economics just means this: instead of asking “how much are we spending on AI,” you ask “how much does one useful thing cost.”

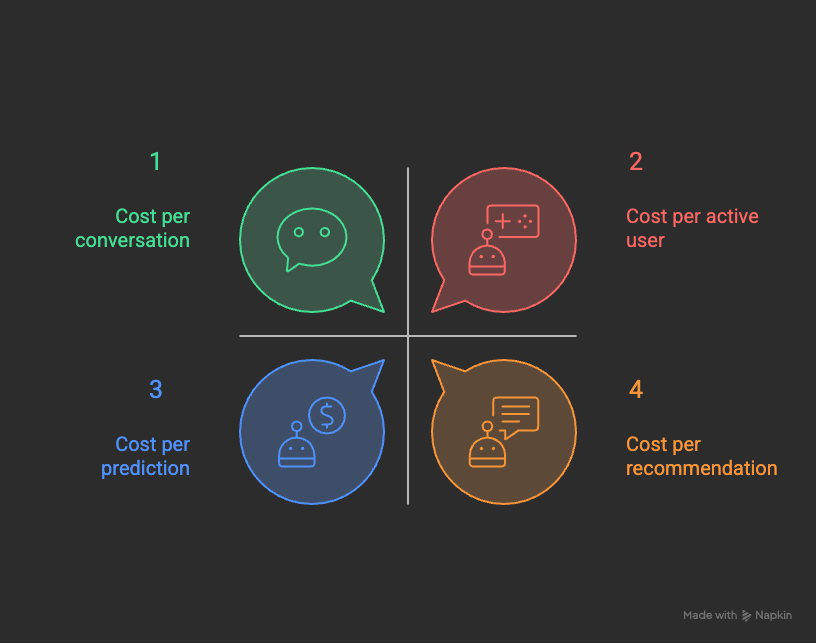

For AI, that unit might be a prompt, a chat, a prediction, or a user. A few quick examples:

A fintech team tracks cost per invoice categorized by an AI model

A SaaS team tracks cost per chat in their AI support assistant

A retail team tracks cost per product recommendation

Once you know those unit costs, you can stack them against revenue, margin, or retention. Then questions like “should we scale this model,” “should we optimize prompts,” or “should we switch providers” stop being vibes and start being math.
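Here is a minimal sketch of that math for the cost per chat example. The monthly spend, chat volume, and revenue attributed to each chat are all hypothetical inputs you would replace with your own.

```python
def unit_cost(total_cost_usd: float, units: int) -> float:
    """Average USD cost of one unit (one chat, one invoice, one recommendation)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost_usd / units


def unit_margin(revenue_per_unit: float, cost_per_unit: float) -> float:
    """Gross margin per unit as a fraction of the revenue attributed to that unit."""
    if revenue_per_unit <= 0:
        raise ValueError("revenue_per_unit must be positive")
    return (revenue_per_unit - cost_per_unit) / revenue_per_unit


# Hypothetical month for an AI support assistant.
monthly_ai_spend = 6_000.00   # USD across tokens, GPUs, storage, egress
chats_handled = 150_000
revenue_per_chat = 0.25       # USD of revenue attributed to each chat

cost_per_chat = unit_cost(monthly_ai_spend, chats_handled)
margin = unit_margin(revenue_per_chat, cost_per_chat)

print(f"cost per chat: ${cost_per_chat:.3f}")
print(f"unit margin:   {margin:.0%}")
```

With these inputs each chat costs about $0.04 against $0.25 of attributed revenue. If a prompt change or provider switch moves the cost per chat, the margin moves with it, and that is the number worth debating.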

Predictive FinOps: AI watching AI

AI is not only generating spend, it is also helping watch it.

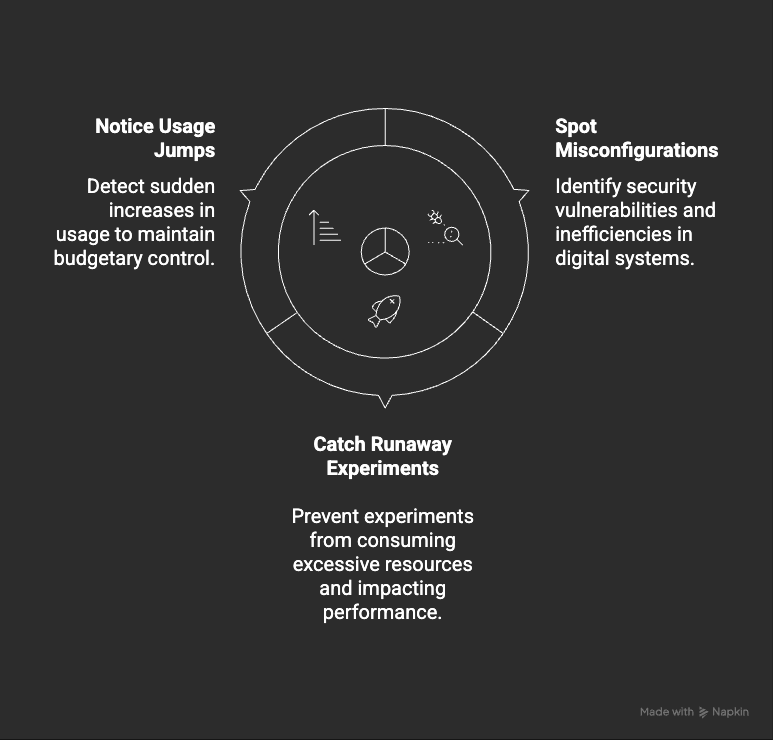

Most cloud providers now ship anomaly detection that uses machine learning to learn your normal spend and flag weird spikes. Even a basic setup can help.

You do not need a perfect model here. You just need something checking the bill more often than a human realistically can.
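To show how low the bar for "something" can be, here is a rough sketch that flags daily spend spikes with a rolling z-score. This is a simple statistical stand-in, not how any cloud provider's anomaly detection works, and the window, threshold, and spend series are all hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(daily_spend: list[float], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return indices of days whose spend exceeds the mean of the preceding
    `window` days by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history gives no scale for a z-score, so skip the day.
            continue
        if (daily_spend[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily AI spend in USD, with one obvious spike on day 8.
spend = [100, 102, 98, 101, 99, 103, 97, 100, 250, 101]
print(flag_anomalies(spend))
```

A check like this can run on every billing export. One caveat of the simple approach: a spike inflates the rolling window for the following days, so a second spike right after the first is harder to catch.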

Where FinOps really shows up

This is where FinOps comes back into the center of the story.

Unit economics turns AI from “mysterious cost center” into something FinOps can actually govern:

you tie AI spend to business outcomes like revenue, margin, or customer experience

you use anomaly detection and simple forecasting as guardrails, not as after-the-fact cleanup

you bring finance, engineering, and product into the same conversation, looking at the same unit metrics

Instead of arguing about whether AI is “too expensive,” teams can ask a better question:

Is this feature worth its cost per prediction, per conversation, or per user?

That is FinOps thinking applied directly to AI, and it is how AI workloads stop being scary line items and start becoming intentional investments.

Find Your AI Flow

If you are in the mood for a quick reflection:

Where is AI already flowing through your stack?

Which feature or model would hurt the most if it suddenly stopped working?

Do you know its cost per unit today?

If the last answer is “not really,” that is a perfect candidate for your first AI unit economics experiment.

NEWS

The Burn-Down Bulletin: More Things to Know

FinOps For AI: How Crawl, Walk, Run Works For Managing AI Costs

CloudZero breaks down a maturity path for AI cost management, from basic visibility into GPU and token spend to more advanced unit economics like cost per customer or cost per feature.

Unit Economics For AI SaaS Companies: A Survival Guide For CFOs

Drivetrain explains how token based pricing changes SaaS margins and shows how to track AI unit costs so finance teams can protect profitability as AI usage grows.

Debunking The Myths Of AI Cost Management In The Cloud

Ternary tackles common myths about AI cost management and argues for clearer boundaries between experimentation and production, plus better visibility into GPU and AI service spend.

FinOps For AI: 8 Steps To Managing AI Costs And Resources

Flexera offers a step by step checklist for managing AI costs, from tagging AI workloads and separating training vs inference to aligning AI usage with business value.

Stop Asking What AI Costs, Ask If It Is Worth It

Another CloudZero piece that leans into value, encouraging teams to move from “what does AI cost” to “is this AI feature worth its unit cost” and connect AI spend directly to product strategy.

That’s all for this week. See you next Tuesday!