HubSpot's ex-Head of Paid shares his 2026 playbook

Rex Gelb spent a decade building HubSpot's paid engine. Now he's showing founders exactly how to do it.

On April 27th, get the framework to structure, launch, and scale paid media that drives pipeline, not just traffic. 20 minutes. Live Q&A. Free.

PROCUREMENT

AI Lock-In Is a Procurement Problem

There is a pattern that tends to show up around the 12-to-18 month mark of an enterprise AI deployment. Engineering has built something practical, the model is in production, users are getting value, and then procurement gets pulled into a renewal conversation and realizes the negotiating position has changed in ways nobody tracked while they were heads-down building.

This is where AI vendor lock-in becomes a financial problem. Not when someone flags it on a risk register, but when the renewal numbers are materially higher than the original deal and the cost of switching has become genuinely difficult to defend.

What makes this different from the cloud lock-in conversations of the past decade is not that the risk is new. It is that the mechanisms are faster, less visible, and almost entirely unmodeled in the original procurement process.

What is AI lock-in?

Vendor lock-in describes the condition where switching providers becomes expensive enough to constrain rational purchasing decisions at renewal time. In traditional SaaS, that cost is familiar: data migration, integration rebuilds, workflow re-implementation. Procurement knows how to reach for those levers.

AI lock-in accumulates differently because the dependency is not just in the data or the contract. It is in the model itself.

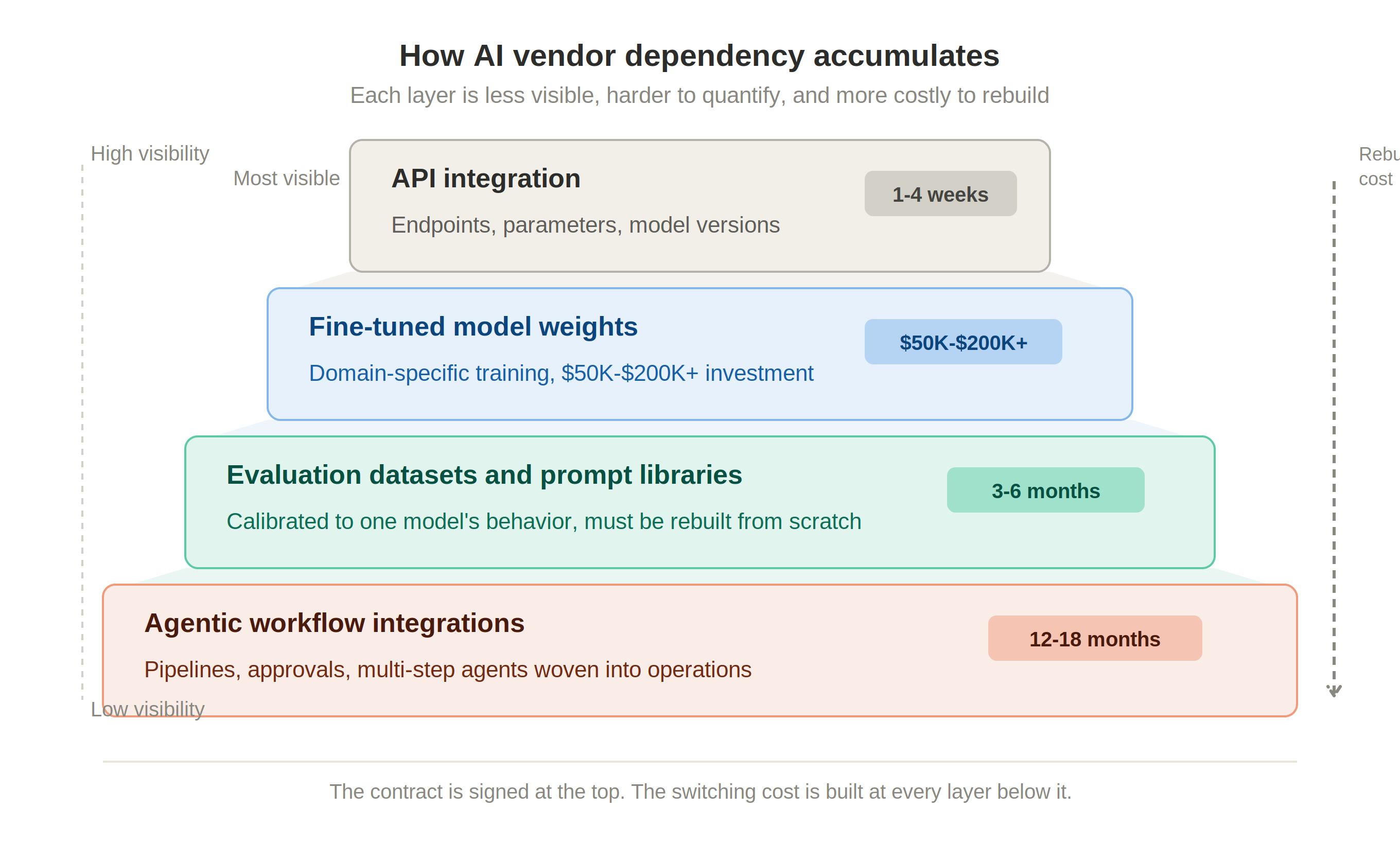

When an organization commits to a foundation model provider, whether OpenAI, Anthropic, Google, AWS Bedrock, or Azure OpenAI, several layers of dependency build at once. The API layer is the most visible: code gets written against a specific provider's endpoint. But the layers below are harder.

Fine-tuning is one of them. It takes a pre-trained model and continues its training on your domain's data, so the model learns your terminology and task-specific patterns. For a domain-specific model, that work can add $50,000 to $200,000 or more on top of existing infrastructure costs. That investment does not travel cleanly to a different provider. The weights belong to the infrastructure that produced them. If you switch, you are often starting that work over.

Then there are evaluation frameworks, prompt libraries, and agentic workflow integrations, each one an investment that ties the deployment more tightly to the provider. AI agreements that lack clear exit mechanics can leave the customer stranded if costs increase, performance degrades, or a provider's roadmap changes.

Why it develops faster than cloud lock-in did

Cloud infrastructure lock-in accumulated over years and was traceable in architecture diagrams. AI dependency is harder to see. A fine-tuned model is not a tagged resource in your cost management tool, a prompt library is not a procurement line item, and an evaluation framework does not appear in your FinOps dashboard.

There is also a pace problem: AI models evolve rapidly, and coupling to a single vendor's roadmap can leave organizations vulnerable. Most do not feel the heat of lock-in until they try to pivot. By the time procurement reviews the renewal, the technical team has often already committed to capabilities that do not exist in equivalent form elsewhere.

In a recent survey, 81 percent of organizations said they are at least somewhat concerned about their dependency on specific AI vendors. Nearly half cited data migration challenges and overdependence on a single vendor as primary risks, and 41 percent cited sudden price hikes. What is less clear is whether that concern is being addressed at the contract stage, before the dependency is built.

The financial risk that gets missed at signing

Enterprise AI contracts are structured around the easy numbers: API pricing per thousand tokens, committed usage tiers, seat-based licensing. Those are negotiable, and procurement is getting better at them. Usage-based pricing can scale unpredictably with adoption, so setting usage caps and renegotiation rights is important.
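
To make the case for usage caps concrete, here is a minimal sketch of how compounding adoption can push usage-based spend past a budget ceiling. The starting spend, growth rate, cap, and the function name `first_month_over_cap` are all hypothetical values chosen for illustration, not figures from any real contract.

```python
def first_month_over_cap(start_spend, monthly_growth, cap, horizon=36):
    """Return the first month (1-indexed) in which spend exceeds the cap.

    start_spend: spend in month 1 (hypothetical).
    monthly_growth: compound month-over-month growth, e.g. 0.10 for 10%.
    cap: the budget ceiling or contractual usage cap.
    Returns None if the cap is never exceeded within the horizon.
    """
    spend = start_spend
    for month in range(1, horizon + 1):
        if spend > cap:
            return month
        spend *= 1 + monthly_growth
    return None


# Hypothetical: $20,000 in month 1, growing 10% per month, $50,000 cap.
breach = first_month_over_cap(20_000, 0.10, 50_000)
print(f"Spend first exceeds the cap in month {breach}")
```

Under these made-up assumptions the cap is breached inside the first year, well before a typical renewal review would look at the numbers.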

What often gets missed is the switching cost side of the ledger. In traditional SaaS, switching costs routinely exceed two to three times annual license cost, and migration projects for deeply embedded platforms typically run 12 to 18 months. AI deployments can get there faster because technical investment compounds more quickly than most contract review cycles catch.
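
A back-of-the-envelope version of that ledger might look like the sketch below. The two-to-three-times multiple is the SaaS rule of thumb cited above, and the text describes it as something costs routinely exceed, so treat the result as a floor. The contract value, the rework figure, and the function name are assumptions for illustration.

```python
def switching_cost_range(annual_license, multiple_low=2.0, multiple_high=3.0, rework=0.0):
    """Return a (low, high) estimate of switching cost.

    annual_license: yearly contract value (hypothetical input).
    multiple_low / multiple_high: the 2x to 3x SaaS rule of thumb.
    rework: one-off investment that has to be redone, such as fine-tuning.
    """
    low = annual_license * multiple_low + rework
    high = annual_license * multiple_high + rework
    return low, high


# Hypothetical deployment: $400,000 per year plus $100,000 of fine-tuning to redo.
low, high = switching_cost_range(400_000, rework=100_000)
print(f"Estimated switching cost: ${low:,.0f} to ${high:,.0f}")
```

Putting a number like this next to the renewal quote is one way to show what the incumbent's pricing leverage is actually worth.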

There is also a pricing structure risk. AI inference is priced in ways that favor the provider as usage scales. Gartner forecasts LLM inference costs will drop over 90 percent by 2030, but enterprise buyers should not expect providers to pass through all savings. Agentic AI capabilities require significantly more tokens per task and will partially offset those reductions. If procurement modeled costs based on simple query volume and engineering is building multi-step agentic workflows, the financial model is already outdated.
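
The interaction between falling prices and rising token consumption is easy to check with simple arithmetic. The sketch below is illustrative only: the task volume, tokens per task, and starting price are hypothetical, and the 20x token multiplier for agentic workflows is an assumption, not a measured figure. Only the 90 percent price drop comes from the forecast cited above.

```python
def monthly_inference_cost(tasks, tokens_per_task, price_per_1k_tokens):
    """Monthly spend = tasks x tokens per task x price per thousand tokens."""
    return tasks * tokens_per_task * price_per_1k_tokens / 1000


# All inputs below are hypothetical.
TASKS_PER_MONTH = 1_000_000
PRICE_TODAY = 0.01                 # dollars per 1,000 tokens
PRICE_AFTER_DROP = PRICE_TODAY * 0.10  # a 90 percent price reduction

# What procurement modeled: simple queries at today's price.
simple = monthly_inference_cost(TASKS_PER_MONTH, 1_000, PRICE_TODAY)

# What engineering is building: multi-step agentic tasks, assumed to use
# 20x the tokens per task, billed at the reduced price.
agentic = monthly_inference_cost(TASKS_PER_MONTH, 20_000, PRICE_AFTER_DROP)

print(f"Simple queries, today's price:   ${simple:,.0f} per month")
print(f"Agentic tasks, 90% cheaper price: ${agentic:,.0f} per month")
```

In this made-up scenario, monthly spend doubles even though the unit price fell by 90 percent, which is why a model built on query volume alone goes stale.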

What FinOps and procurement can do about it

FinOps can provide the framing that makes AI dependency visible before it becomes structural. Cost allocation tags can be extended to capture AI workload types (inference, fine-tuning, embedding, evaluation) and mapped to specific providers. Showback reporting can surface how much of the organization's AI investment is concentrated in a single provider's ecosystem, which is the precondition for a procurement conversation about risk.
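
As a minimal sketch of that showback view, the snippet below rolls up tagged cost records by provider and reports each provider's share of total AI spend. The record layout, provider names, and dollar amounts are hypothetical; a real export from a cost management tool will have its own schema.

```python
from collections import defaultdict

# Hypothetical cost records, each tagged with a provider and an AI workload type.
records = [
    {"provider": "provider_a", "workload": "inference", "cost": 42_000},
    {"provider": "provider_a", "workload": "fine-tuning", "cost": 18_000},
    {"provider": "provider_a", "workload": "embedding", "cost": 6_000},
    {"provider": "provider_b", "workload": "inference", "cost": 9_000},
    {"provider": "provider_b", "workload": "evaluation", "cost": 5_000},
]


def provider_share(records):
    """Return each provider's fraction of total tagged AI spend."""
    totals = defaultdict(float)
    for record in records:
        totals[record["provider"]] += record["cost"]
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {provider: cost / grand_total for provider, cost in totals.items()}


shares = provider_share(records)
# Print providers from most to least concentrated.
for provider, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{provider}: {share:.1%} of AI spend")
```

A single provider holding most of the spend is not a problem by itself, but it is the number procurement needs in hand before discussing exit terms.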

Sophisticated buyers are beginning to ask at the contracting stage: if we needed to switch providers in 90 to 180 days, what would we need from the incumbent to make that possible? The answer involves defined transition periods, pre-agreed migration rates, and clear rights to prompts, evaluation datasets, and embedding indexes the customer created.

The leverage to negotiate those terms is highest at signing, when the provider wants the deal, and lowest at renewal, when the switching cost has already accumulated. FinOps and procurement working together (modeling lock-in exposure, establishing AI cost tracking, and surfacing the full cost of the deployment) is what lets the organization use that leverage while it still has it.

This is not about avoiding deep commitment to an AI provider. It is about understanding what you are actually committing to when you sign, which is not just a price per token but a technical and financial relationship with a provider whose roadmap you do not control. That understanding is what makes the next renewal a negotiation.

RESOURCES

The Burn-Down Bulletin: More Things to Know

Building Exit Rights and Portability into AI Deals
Morgan Lewis breaks down the contract provisions that preserve optionality when an AI relationship ends, including transition assistance, data export rights, and migration obligations.

Negotiating AI Provisions in Commercial and Technology Contracts
A current look at how enterprise AI contracting is evolving and where sophisticated buyers are pushing back on standard vendor terms.

7 Best Practices to Avoid AI Vendor Lock-In
TechTarget covers the technical and contractual dimensions of AI lock-in, including API dependency, data migration risk, and regulatory exposure.

LLM Development Cost: What Enterprises Budget in 2026
A grounded breakdown of what fine-tuning and RAG deployments actually cost at enterprise scale, useful context for modeling switching exposure.

That’s all for this week. See you next Tuesday!